Tutorial: Text Classification in Python Using spaCy

Published: April 16, 2019

Text is an extremely rich source of data. Each minute, people send hundreds of millions of new emails and text messages. There's a veritable mountain of text data waiting to be mined for insights. But data scientists who want to glean meaning from all of that text data face a challenge: it is difficult to analyze and process because it exists in unstructured form.

In this tutorial, we'll take a look at how we can transform all of that unstructured text data into something more useful for analysis and natural language processing, using the helpful Python package spaCy (documentation).

Once we've done this, we'll be able to derive meaningful patterns and themes from text data. This is useful in a wide variety of data science applications: spam filtering, support tickets, social media analysis, contextual advertising, reviewing customer feedback, and more.

Specifically, we're going to take a high-level look at natural language processing (NLP). Then we'll work through some of the important basic operations for cleaning and analyzing text data with spaCy. Then we'll dive into text classification, specifically Logistic Regression Classification, using some real-world data (text reviews of Amazon's Alexa smart home speaker).

What is Natural Language Processing?

Natural language processing (NLP) is a branch of machine learning that deals with processing, analyzing, and sometimes generating human speech ("natural language").

There's no doubt that humans are still much better than machines at determining the meaning of a string of text. But in data science, we'll often run into data sets that are far too large to be analyzed by a human in a reasonable amount of time. We may also encounter situations where no human is available to analyze and respond to a piece of text input. In these situations, we can use natural language processing techniques to help machines get some understanding of the text's meaning (and if necessary, respond accordingly).

For example, natural language processing is widely used in sentiment analysis, since analysts are often trying to determine the overall sentiment from huge volumes of text data that would be time-consuming for humans to comb through. It's also used in advertisement matching: determining the subject of a body of text and assigning a relevant advertisement automatically. And it's used in chatbots, voice assistants, and other applications where machines need to understand and quickly respond to input that comes in the form of natural human language.

Analyzing and Processing Text With spaCy

spaCy is an open-source natural language processing library for Python. It is designed particularly for production use, and it can help us to build applications that process massive volumes of text efficiently. First, let's take a look at some of the basic analytical tasks spaCy can handle.

Installing spaCy

We'll need to install spaCy and its English-language model before proceeding further. We can do this using the following command line commands:

pip install spacy

python -m spacy download en

We can also use spaCy in a Jupyter Notebook. It's not one of the pre-installed libraries that Jupyter includes by default, though, so we'll need to run these commands from the notebook to get spaCy installed in the correct Anaconda directory. Note that we use ! in front of each command to let the Jupyter notebook know that it should be read as a command line command.

!pip install spacy

!python -m spacy download en

Tokenizing the Text

Tokenization is the process of breaking text into pieces, called tokens. Words and punctuation marks (, . " ') become separate tokens, and the spaces between them are dropped. spaCy's tokenizer takes input in the form of unicode text and outputs a sequence of token objects.

Let's take a look at a simple example. Imagine we have the following text, and we'd like to tokenize it:

When learning data science, you shouldn't get discouraged.

Challenges and setbacks aren't failures, they're just part of the journey.

There are a couple of different ways we can approach this. The first is called word tokenization, which means breaking up the text into individual words. This is a critical step for many language processing applications, as they often require input in the form of individual words rather than longer strings of text.

In the code below, we'll import spaCy and its English-language model, and tell it that we'll be doing our natural language processing using that model. Then we'll assign our text string to text. Using nlp(text), we'll process that text in spaCy and assign the result to a variable called my_doc.

At this point, our text has already been tokenized, but spaCy stores tokenized text as a doc, and we'd like to look at it in list form. So we'll create a for loop that iterates through our doc, adding each word token it finds in our text string to a list called token_list so that we can take a better look at how words are tokenized.

    # Word tokenization
    from spacy.lang.en import English

    # Load English tokenizer, tagger, parser, NER and word vectors
    nlp = English()

    text = """When learning data science, you shouldn't get discouraged!
    Challenges and setbacks aren't failures, they're just part of the journey. You've got this!"""

    # "nlp" Object is used to create documents with linguistic annotations.
    my_doc = nlp(text)

    # Create list of word tokens
    token_list = []
    for token in my_doc:
        token_list.append(token.text)
    print(token_list)

    ['When', 'learning', 'data', 'science', ',', 'you', 'should', "n't", 'get', 'discouraged', '!', '\n', 'Challenges', 'and', 'setbacks', 'are', "n't", 'failures', ',', 'they', "'re", 'just', 'part', 'of', 'the', 'journey', '.', 'You', "'ve", 'got', 'this', '!']

As we can see, spaCy produces a list that contains each token as a separate item. Notice that it has recognized that contractions such as shouldn't actually represent two distinct words, and it has thus broken them down into two distinct tokens.

First we need to load the language data. In the example above, we load English using the English() class and create an nlp object. The nlp object is used to create documents with linguistic annotations and various NLP properties. After creating the document, we build a token list.
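To see why a purpose-built tokenizer is useful, it can help to compare against a naive one. The sketch below is not how spaCy tokenizes; it is a minimal regular-expression splitter (the function name naive_tokenize is our own, purely for illustration) that separates runs of word characters from punctuation.

```python
import re

def naive_tokenize(text):
    """Split text into runs of word characters and single punctuation marks."""
    # \w+ matches a run of letters, digits or underscores;
    # [^\w\s] matches one punctuation character. Whitespace is skipped.
    return re.findall(r"\w+|[^\w\s]", text)

sample = "You shouldn't get discouraged!"
tokens = naive_tokenize(sample)
print(tokens)
```

Here the contraction shouldn't comes out as three fragments (shouldn, ', t) rather than the linguistically meaningful pair should and n't that spaCy produced above, which is the kind of case language-specific tokenizer rules exist to handle.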

If we want, we can also break the text into sentences rather than words. This is called sentence tokenization. When performing sentence tokenization, the tokenizer looks for specific characters that fall between sentences, like periods, exclamation points, and newline characters. For sentence tokenization, we will use a preprocessing pipeline because sentence preprocessing using spaCy includes a tokenizer, a tagger, a parser and an entity recognizer that we need to access to correctly identify what's a sentence and what isn't.

In the code below, spaCy tokenizes the text and creates a Doc object. This Doc object uses our preprocessing pipeline's components (tagger, parser and entity recognizer) to break the text down into components. From this pipeline we can extract any component, but here we're going to access sentence tokens using the sentencizer component.

    # sentence tokenization

    # Load English tokenizer, tagger, parser, NER and word vectors
    nlp = English()

    # Create the pipeline 'sentencizer' component
    # (spaCy v2 API; in spaCy v3 this is a single call: nlp.add_pipe('sentencizer'))
    sbd = nlp.create_pipe('sentencizer')

    # Add the component to the pipeline
    nlp.add_pipe(sbd)

    text = """When learning data science, you shouldn't get discouraged!
    Challenges and setbacks aren't failures, they're just part of the journey. You've got this!"""

    # "nlp" Object is used to create documents with linguistic annotations.
    doc = nlp(text)

    # create list of sentence tokens
    sents_list = []
    for sent in doc.sents:
        sents_list.append(sent.text)
    print(sents_list)

    ["When learning data science, you shouldn't get discouraged!", "\nChallenges and setbacks aren't failures, they're just part of the journey.", "You've got this!"]

Once again, spaCy has correctly parsed the text into the format we want, this time outputting a list of sentences found in our source text.

Cleaning Text Data: Removing Stopwords

Most text data that we work with is going to contain a lot of words that aren't actually useful to us. These words, called stopwords, are useful in human speech, but they don't have much to contribute to data analysis. Removing stopwords helps us eliminate noise and distraction from our text data, and also speeds up the time analysis takes (since there are fewer words to process).

Let's take a look at the stopwords spaCy includes by default. We'll import spaCy and assign the stopwords in its English-language model to a variable called spacy_stopwords so that we can take a look.

    # Stop words
    # importing stop words from English language.
    import spacy
    from spacy.lang.en.stop_words import STOP_WORDS
    spacy_stopwords = STOP_WORDS

    # Printing the total number of stop words:
    print('Number of stop words: %d' % len(spacy_stopwords))

    # Printing first twenty stop words:
    print('First twenty stop words: %s' % list(spacy_stopwords)[:20])

    Number of stop words: 312
    First twenty stop words: ['was', 'various', 'fifty', "'s", 'used', 'once', 'because', 'himself', 'can', 'name', 'many', 'seems', 'others', 'something', 'anyhow', 'nowhere', 'serious', 'forty', 'he', 'now']

As we can see, spaCy's default list of stopwords includes 312 total entries, and each entry is a single word. We can also see why many of these words wouldn't be useful for data analysis. Transition words like nonetheless, for example, aren't necessary for understanding the basic meaning of a sentence. And other words like somebody are too vague to be of much use for NLP tasks.

If we wanted to, we could also create our own customized list of stopwords. But for our purposes in this tutorial, the default list that spaCy provides will be fine.
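As a rough illustration of what a customized list might look like, here is a minimal sketch in plain Python. The custom_stopwords set and the remove_stopwords helper are hypothetical names invented for this example, and the entries are arbitrary; this is not spaCy's own mechanism for customizing stopwords.

```python
# Hypothetical custom stopword set; the entries are illustrative only.
custom_stopwords = {"the", "a", "of", "and", "are", "just", "they"}

def remove_stopwords(tokens, stopwords):
    """Return the tokens whose lowercase form is not in the stopword set."""
    return [tok for tok in tokens if tok.lower() not in stopwords]

tokens = ["Challenges", "and", "setbacks", "are", "just",
          "part", "of", "the", "journey"]
filtered = remove_stopwords(tokens, custom_stopwords)
print(filtered)
```

Using a set rather than a list keeps each membership check fast, which matters once we are filtering thousands of reviews.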

Removing Stopwords from Our Data

Now that we've got our list of stopwords, let's use it to remove the stopwords from the text string we were working on in the previous section. Our text is already stored in the variable text, so we don't need to define that again.

Instead, we'll create an empty list called filtered_sent and then iterate through our doc variable to look at each tokenized word from our source text. spaCy includes a bunch of helpful token attributes, and we'll use one of them called is_stop to identify words that aren't in the stopword list and then append them to our filtered_sent list.

    from spacy.lang.en.stop_words import STOP_WORDS

    # Implementation of stop words:
    filtered_sent = []

    # "nlp" Object is used to create documents with linguistic annotations.
    doc = nlp(text)

    # filtering stop words
    for word in doc:
        if not word.is_stop:
            filtered_sent.append(word)
    print("Filtered Sentence:", filtered_sent)

    Filtered Sentence: [learning, data, science, ,, discouraged, !, 
    , Challenges, setbacks, failures, ,, journey, ., got, !]

It's not too difficult to see why removing stopwords can be helpful. Removing them has boiled our original text down to just a few words that give us a good idea of what the sentences are discussing: learning data science, and discouraging challenges and setbacks along that journey.

Lexicon Normalization

Lexicon normalization is another step in the text data cleaning process. In the big picture, normalization converts high dimensional features into low dimensional features which are appropriate for any machine learning model. For our purposes here, we're only going to look at lemmatization, a way of processing words that reduces them to their roots.

Lemmatization

Lemmatization is a way of dealing with the fact that while words like connect, connection, connecting, connected, etc. aren't exactly the same, they all have the same essential meaning: connect. The differences in spelling have grammatical functions in spoken language, but for machine processing, those differences can be confusing, so we need a way to change all the words that are forms of the word connect into the word connect itself.

One method for doing this is called stemming. Stemming involves simply lopping off easily-identified prefixes and suffixes to produce what's often the simplest version of a word. Connection, for example, would have the -ion suffix removed and be correctly reduced to connect. This kind of simple stemming is often all that's needed, but lemmatization, which actually looks at words and their roots (called lemmas) as described in the dictionary, is more precise (as long as the words exist in the dictionary).
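To make the idea of lopping off suffixes concrete, here is a deliberately crude sketch. It is not a real stemming algorithm such as the Porter stemmer; the naive_stem function and its short suffix list are assumptions made up for this example.

```python
def naive_stem(word, suffixes=("ing", "ion", "ed", "s")):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in suffixes:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

words = ["connect", "connection", "connecting", "connected", "connects"]
stems = [naive_stem(w) for w in words]
print(stems)

# Blind suffix stripping has limits: "running" loses "ing" and becomes "runn".
print(naive_stem("running"))
```

Every form of connect collapses to connect, but running becomes runn rather than run. A dictionary-based lemmatizer avoids that kind of mistake, which is why the rest of this section uses lemmatization.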

Since spaCy includes a built-in way to break a word down into its lemma, we can simply use that for lemmatization. In the following very simple example, we'll use .lemma_ to produce the lemma for each word we're analyzing.

    # Implementing lemmatization
    lem = nlp("run runs running runner")

    # finding lemma for each word
    for word in lem:
        print(word.text, word.lemma_)

    run run
    runs run
    running run
    runner runner

Part of Speech (POS) Tagging

A word's part of speech defines its function within a sentence. A noun, for example, identifies an object. An adjective describes an object. A verb describes action. Identifying and tagging each word's part of speech in the context of a sentence is called Part-of-Speech Tagging, or POS Tagging.

Let's try some POS tagging with spaCy! We'll need to import its en_core_web_sm model, because that contains the dictionary and grammatical information required to do this analysis. Then all we need to do is load this model with .load() and loop through our new docs variable, identifying the part of speech for each word using .pos_.

(Note the u in u"All is well that ends well." signifies that the string is a Unicode string.)

    # POS tagging

    # importing the model en_core_web_sm of English for vocabulary, syntax & entities
    import en_core_web_sm

    # load en_core_web_sm of English for vocabulary, syntax & entities
    nlp = en_core_web_sm.load()

    # "nlp" Object is used to create documents with linguistic annotations.
    docs = nlp(u"All is well that ends well.")

    for word in docs:
        print(word.text, word.pos_)

    All DET
    is VERB
    well ADV
    that DET
    ends VERB
    well ADV
    . PUNCT

Hooray! spaCy has correctly identified the part of speech for each word in this sentence. Being able to identify parts of speech is useful in a variety of NLP-related contexts, because it helps more accurately understand input sentences and more accurately construct output responses.

Entity Detection

Entity detection, also called entity recognition, is a more advanced form of language processing that identifies important elements like places, people, organizations, and languages within an input string of text. This is really helpful for quickly extracting information from text, since you can quickly pick out important topics or identify key sections of text.

Let's try out some entity detection using a few paragraphs from this recent article in the Washington Post. We'll use .label_ (and its numeric ID, .label) to grab a label for each entity that's detected in the text, and then we'll take a look at these entities in a more visual format using spaCy's displaCy visualizer.

    # for visualization of Entity detection importing displacy from spacy:
    from spacy import displacy

    nytimes = nlp(u"""New York City on Tuesday declared a public health emergency and ordered mandatory measles vaccinations amid an outbreak, becoming the latest national flash point over refusals to inoculate against dangerous diseases. At least 285 people have contracted measles in the city since September, mostly in Brooklyn's Williamsburg neighborhood. The order covers four Zip codes there, Mayor Bill de Blasio (D) said Tuesday. The mandate orders all unvaccinated people in the area, including a concentration of Orthodox Jews, to receive inoculations, including for children as young as 6 months old. Anyone who resists could be fined up to $1,000.""")

    entities = [(i, i.label_, i.label) for i in nytimes.ents]
    entities

    [(New York City, 'GPE', 384), (Tuesday, 'DATE', 391), (At least 285, 'CARDINAL', 397), (September, 'DATE', 391), (Brooklyn, 'GPE', 384), (Williamsburg, 'GPE', 384), (four, 'CARDINAL', 397), (Bill de Blasio, 'PERSON', 380), (Tuesday, 'DATE', 391), (Orthodox Jews, 'NORP', 381), (6 months old, 'DATE', 391), (up to $1,000, 'MONEY', 394)]

Using this technique, we can identify a variety of entities within the text. The spaCy documentation provides a full list of supported entity types, and we can see from the short example above that it's able to identify a variety of different entity types, including specific locations (GPE), date-related words (DATE), important numbers (CARDINAL), specific individuals (PERSON), etc.

Using displaCy we can also visualize our input text, with each identified entity highlighted by color and labeled. We'll use style = "ent" to tell displaCy that we want to visualize entities here.

    displacy.render(nytimes, style = "ent", jupyter = True)

New York City GPE on Tuesday DATE declared a public health emergency and ordered mandatory measles vaccinations amid an outbreak, becoming the latest national flash point over refusals to inoculate against dangerous diseases. At least 285 CARDINAL people have contracted measles in the city since September DATE , mostly in Brooklyn GPE 's Williamsburg GPE neighborhood. The order covers four CARDINAL Zip codes there, Mayor Bill de Blasio PERSON (D) said Tuesday DATE . The mandate orders all unvaccinated people in the area, including a concentration of Orthodox Jews NORP , to receive inoculations, including for children as young as 6 months old DATE . Anyone who resists could be fined up to $1,000 MONEY .

Dependency Parsing

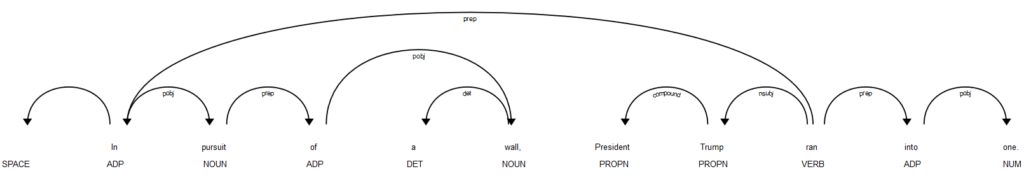

Dependency parsing is a language processing technique that allows us to better determine the meaning of a sentence by analyzing how it's constructed to determine how the individual words relate to each other.

Consider, for example, the sentence "Bill throws the ball." We have two nouns (Bill and ball) and one verb (throws). But we can't just look at these words individually, or we may end up thinking that the ball is throwing Bill! To understand the sentence correctly, we need to look at the word order and sentence structure, not just the words and their parts of speech.

Doing this is quite complicated, but thankfully spaCy will take care of the work for us! Below, let's give spaCy another short sentence pulled from the news headlines. Then we'll use another spaCy property called noun_chunks, which breaks the input down into nouns and the words describing them, and iterate through each chunk in our source text, identifying the chunk's text, its root word, the root's dependency label, and the root's head word.

    docp = nlp(" In pursuit of a wall, President Trump ran into one.")

    for chunk in docp.noun_chunks:
        print(chunk.text, chunk.root.text, chunk.root.dep_,
              chunk.root.head.text)

    pursuit pursuit pobj In
    a wall wall pobj of
    President Trump Trump nsubj ran

This output can be a little bit difficult to follow, but since we've already imported the displaCy visualizer, we can use that to view a dependency diagram using style = "dep" that's much easier to understand:

    displacy.render(docp, style="dep", jupyter=True)

Of course, we can also check out spaCy's documentation on dependency parsing to get a better understanding of the different labels that might get applied to our text depending on how each sentence is interpreted.

Word Vector Representation

When we're looking at words alone, it's difficult for a machine to understand connections that a human would understand immediately. Engine and car, for example, have what might seem like an obvious connection (cars run using engines), but that link is not so obvious to a computer.

Thankfully, there's a way we can represent words that captures more of these sorts of connections. A word vector is a numeric representation of a word that communicates its relationship to other words.

Each word is interpreted as a unique and lengthy array of numbers. You can think of these numbers as being something like GPS coordinates. GPS coordinates consist of two numbers (latitude and longitude), and if we saw two sets of GPS coordinates that were numerically close to each other (like 43,-70, and 44,-70), we would know that those two locations were relatively close together. Word vectors work similarly, although there are a lot more than two coordinates assigned to each word, so they're much harder for a human to eyeball.
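One common way to measure how "close" two word vectors are is cosine similarity, which compares the directions the vectors point in. The sketch below uses made-up three-dimensional vectors purely for illustration; real word vectors have many more dimensions, and the numbers in toy_vectors are invented rather than taken from any spaCy model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Invented toy vectors, for illustration only.
toy_vectors = {
    "car": [0.9, 0.8, 0.1],
    "engine": [0.8, 0.9, 0.2],
    "mango": [0.1, 0.2, 0.9],
}

sim_car_engine = cosine_similarity(toy_vectors["car"], toy_vectors["engine"])
sim_car_mango = cosine_similarity(toy_vectors["car"], toy_vectors["mango"])
print("car vs engine:", round(sim_car_engine, 3))
print("car vs mango:", round(sim_car_mango, 3))
```

With these toy numbers, car and engine score much closer to 1 than car and mango, mirroring the intuition that related words should sit near each other in vector space.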

Using spaCy's en_core_web_sm model, let's take a look at the length of a vector for a single word, and what that vector looks like using .vector and .shape.

    import en_core_web_sm
    nlp = en_core_web_sm.load()
    mango = nlp(u'mango')
    print(mango.vector.shape)
    print(mango.vector)

    (96,)
    [ 1.0466383  -1.5323697  -0.72177905 -2.4700649  -0.2715162   1.1589639
      1.7113379  -0.31615403 -2.0978343   1.837553    1.4681302   2.728043
     -2.3457408  -5.17184    -4.6110015  -0.21236466 -0.3029521   4.220028
     -0.6813917   2.4016762  -1.9546705  -0.85086954  1.2456163   1.5107994
      0.4684736   3.1612053   0.15542296  2.0598564   3.780035    4.6110964
      0.6375268  -1.078107   -0.96647096 -1.3939928  -0.56914186  0.51434743
      2.3150034  -0.93199825 -2.7970662  -0.8540115  -3.4250052   4.2857723
      2.5058174  -2.2150877   0.7860181   3.496335   -0.62606215 -2.0213525
     -4.47421     1.6821622  -6.0789204   0.22800982 -0.36950028 -4.5340714
     -1.7978683  -2.080299    4.125556    3.1852438  -3.286446    1.0892276
      1.017115    1.2736416  -0.10613725  3.5102775   1.1902348   0.05483437
     -0.06298041  0.8280688   0.05514218  0.94817173 -0.49377063  1.1512338
     -0.81374085 -1.6104267   1.8233354  -2.278403   -2.1321895   0.3029334
     -1.4510616  -1.0584296  -3.5698352  -0.13046083 -0.2668339   1.7826645
      0.4639858  -0.8389523  -0.02689964  2.316218    5.8155413  -0.45935947
      4.368636    1.6603007  -3.1823301  -1.4959551  -0.5229269   1.3637555 ]

There's no way that a human could look at that array and identify it as meaning "mango," but representing the word this way works well for machines, because it allows us to represent both the word's meaning and its "proximity" to other similar words using the coordinates in the array.

Text Classification

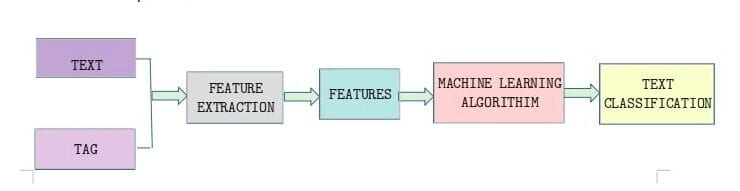

Now that we've looked at some of the cool things spaCy can do in general, let's look at a bigger real-world application of some of these natural language processing techniques: text classification. Quite often, we may find ourselves with a set of text data that we'd like to classify according to some parameters (perhaps the subject of each snippet, for example) and text classification is what will help us to do this.

The diagram in this section illustrates the big-picture view of what we want to do when classifying text. First, we extract the features we want from our source text (and any tags or metadata it came with), and then we feed our cleaned data into a machine learning algorithm that does the classification for us.

Importing Libraries

We'll start by importing the libraries we'll need for this task. We've already imported spaCy, but we'll also want pandas and scikit-learn to help with our analysis.

    import pandas as pd
    from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
    from sklearn.base import TransformerMixin
    from sklearn.pipeline import Pipeline

Loading Data

Above, we have looked at some simple examples of text analysis with spaCy, but now we'll be working on some Logistic Regression Classification using scikit-learn. To make this more realistic, we're going to use a real-world data set: this set of Amazon Alexa product reviews.

This data set comes as a tab-separated file (.tsv). It has five columns: rating, date, variation, verified_reviews, feedback.

rating denotes the rating each user gave the Alexa (out of 5). date indicates the date of the review, and variation describes which model the user reviewed. verified_reviews contains the text of each review, and feedback contains a sentiment label, with 1 denoting positive sentiment (the user liked it) and 0 denoting negative sentiment (the user didn't).

This dataset has consumer reviews of Amazon Alexa products like Echos, Echo Dots, Alexa Firesticks etc. What we're going to do is develop a classification model that looks at the review text and predicts whether a review is positive or negative. Since this data set already includes whether a review is positive or negative in the feedback column, we can use those answers to train and test our model. Our goal here is to produce an accurate model that we could then use to process new user reviews and quickly determine whether they were positive or negative.

Let's start by reading the data into a pandas dataframe and then using the built-in functions of pandas to help us take a closer look at our data.

    # Loading TSV file
    df_amazon = pd.read_csv("datasets/amazon_alexa.tsv", sep="\t")

    # Top 5 records
    df_amazon.head()

| | rating | date | variation | verified_reviews | feedback |
|---|---|---|---|---|---|
| 0 | 5 | 31-Jul-18 | Charcoal Fabric | Love my Echo! | 1 |
| 1 | 5 | 31-Jul-18 | Charcoal Fabric | Loved it! | 1 |
| 2 | 4 | 31-Jul-18 | Walnut Finish | Sometimes while playing a game, you can answer… | 1 |
| 3 | 5 | 31-Jul-18 | Charcoal Fabric | I have had a lot of fun with this thing. My 4 … | 1 |
| 4 | 5 | 31-Jul-18 | Charcoal Fabric | Music | 1 |

    # shape of dataframe
    df_amazon.shape

    (3150, 5)

    # View data information
    df_amazon.info()

    <class 'pandas.core.frame.DataFrame'>
    RangeIndex: 3150 entries, 0 to 3149
    Data columns (total 5 columns):
    rating              3150 non-null int64
    date                3150 non-null object
    variation           3150 non-null object
    verified_reviews    3150 non-null object
    feedback            3150 non-null int64
    dtypes: int64(2), object(3)
    memory usage: 123.1+ KB

    # Feedback Value count
    df_amazon.feedback.value_counts()

    1    2893
    0     257
    Name: feedback, dtype: int64

Tokenizing the Data With spaCy

Now that we know what we're working with, let's create a custom tokenizer function using spaCy. We'll use this function to automatically strip information we don't need, like stopwords and punctuation, from each review.

We'll start by importing the English models we need from spaCy, as well as Python's string module, which contains a helpful list of all punctuation marks that we can use in string.punctuation. We'll create variables that contain the punctuation marks and stopwords we want to remove, and a parser that runs input through spaCy's English module.

Then, we'll create a spacy_tokenizer() function that accepts a sentence as input and processes the sentence into tokens, performing lemmatization, lowercasing, and removing stop words. This is similar to what we did in the examples earlier in this tutorial, but now we're putting it all together into a single function for preprocessing each user review we're analyzing.

    import string
    import spacy
    from spacy.lang.en.stop_words import STOP_WORDS
    from spacy.lang.en import English

    # Create our list of punctuation marks
    punctuations = string.punctuation

    # Create our list of stopwords
    nlp = spacy.load('en')
    stop_words = STOP_WORDS

    # Load English tokenizer, tagger, parser, NER and word vectors
    parser = English()

    # Creating our tokenizer function
    def spacy_tokenizer(sentence):
        # Creating our token object, which is used to create documents with linguistic annotations.
        mytokens = parser(sentence)

        # Lemmatizing each token and converting each token into lowercase
        mytokens = [ word.lemma_.lower().strip() if word.lemma_ != "-PRON-" else word.lower_ for word in mytokens ]

        # Removing stop words
        mytokens = [ word for word in mytokens if word not in stop_words and word not in punctuations ]

        # return preprocessed list of tokens
        return mytokens

Defining a Custom Transformer

To further clean our text data, we'll also want to create a custom transformer for removing initial and end spaces and converting text into lower case. Here, we will create a custom predictors class which inherits the TransformerMixin class. This class overrides the transform, fit and get_params methods. We'll also create a clean_text() function that removes spaces and converts text into lowercase.

    # Custom transformer using spaCy
    class predictors(TransformerMixin):
        def transform(self, X, **transform_params):
            # Cleaning Text
            return [clean_text(text) for text in X]

        def fit(self, X, y=None, **fit_params):
            return self

        def get_params(self, deep=True):
            return {}

    # Basic function to clean the text
    def clean_text(text):
        # Removing spaces and converting text into lowercase
        return text.strip().lower()

Vectorization Feature Engineering (TF-IDF)

When we classify text, we end up with text snippets matched with their respective labels. But we can't simply use text strings in our machine learning model; we need a way to convert our text into something that can be represented numerically just like the labels (1 for positive and 0 for negative) are. Classifying text in positive and negative labels is called sentiment analysis. So we need a way to represent our text numerically.

One tool we can use for doing this is called Bag of Words. BoW converts text into the matrix of occurrence of words within a given document. It focuses on whether given words occurred or not in the document, and it generates a matrix that we might see referred to as a BoW matrix or a document term matrix.
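To make the document term matrix concrete, here is a minimal sketch that builds one by hand with Python's standard library. The three short docs are invented for illustration, and splitting on whitespace stands in for real tokenization; this shows the idea rather than how scikit-learn implements it.

```python
from collections import Counter

# Three tiny made-up "reviews".
docs = ["love my echo", "love love it", "echo stopped working"]

# Vocabulary: every distinct word, sorted, becomes one column.
vocab = sorted({word for doc in docs for word in doc.split()})

# One row per document, one count per vocabulary word.
bow_matrix = []
for doc in docs:
    counts = Counter(doc.split())
    bow_matrix.append([counts[word] for word in vocab])

print(vocab)
for row in bow_matrix:
    print(row)
```

Each row has the same length as the vocabulary, so every document becomes a fixed-size numeric vector regardless of how long the original text was.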

We can generate a BoW matrix for our text data by using scikit-learn's CountVectorizer. In the code below, we're telling CountVectorizer to use the custom spacy_tokenizer function we built as its tokenizer, and defining the ngram range we want.

N-grams are combinations of adjacent words in a given text, where n is the number of words included in each token. For example, in the sentence "Who will win the football world cup in 2022?" unigrams would be a sequence of single words such as "who", "will", "win" and so on. Bigrams would be a sequence of two contiguous words such as "who will", "will win", and so on. So the ngram_range parameter we pass to CountVectorizer sets the lower and upper bounds of our ngrams (we'll be using unigrams). Then we'll assign the result to bow_vector.
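The unigram and bigram examples above can be reproduced with a few lines of plain Python. The ngrams helper here is a hypothetical function written for this illustration, not part of scikit-learn or spaCy.

```python
def ngrams(tokens, n):
    """Return every run of n adjacent tokens, each joined by a space."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

tokens = "who will win the football world cup in 2022".split()

unigrams = ngrams(tokens, 1)
bigrams = ngrams(tokens, 2)

print(unigrams[:3])
print(bigrams[:3])
```

A sentence with nine tokens yields nine unigrams but only eight bigrams, since each bigram needs a right-hand neighbor.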

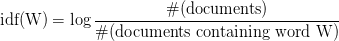

    bow_vector = CountVectorizer(tokenizer = spacy_tokenizer, ngram_range=(1,1))

We'll also want to look at the TF-IDF (Term Frequency-Inverse Document Frequency) for our terms. This sounds complicated, but it's simply a way of normalizing our Bag of Words (BoW) by looking at each word's frequency in comparison to the document frequency. In other words, it's a way of representing how important a particular term is in the context of a given document, based on how many times the term appears and how many other documents that same term appears in. The higher the TF-IDF, the more important that term is to that document.

We can represent this with the following mathematical equation:
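One common textbook form is given here as an assumption, since several variants exist. The symbols are chosen for illustration: $\mathrm{tf}(t, d)$ is the number of times term $t$ appears in document $d$, $N$ is the total number of documents, and $n_t$ is the number of documents that contain $t$.

```latex
\mathrm{tf\text{-}idf}(t, d) = \mathrm{tf}(t, d) \times \mathrm{idf}(t),
\qquad
\mathrm{idf}(t) = \log \frac{N}{n_t}
```

scikit-learn's TfidfVectorizer uses a smoothed variant by default, $\mathrm{idf}(t) = \ln\frac{1 + N}{1 + n_t} + 1$, and then L2-normalizes each document's vector, so its numbers will differ from this plain form.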

Of course, we don't have to calculate that by hand! We can generate TF-IDF automatically using scikit-learn's TfidfVectorizer. Again, we'll tell it to use the custom tokenizer that we built with spaCy, and then we'll assign the result to the variable tfidf_vector.
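As a quick illustration of what TF-IDF weighting does, the sketch below runs TfidfVectorizer on three made-up snippets. It assumes scikit-learn's default tokenizer and default settings rather than spacy_tokenizer. The word "the" appears in every document, so it should receive a lower weight than a word that appears in only some of them.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Toy documents, made up for illustration (not the Alexa review data).
docs = ["love the speaker", "love the sound", "hate the speaker"]

vectorizer = TfidfVectorizer()  # default tokenizer and default smoothing
tfidf_matrix = vectorizer.fit_transform(docs).toarray()

vocab = vectorizer.vocabulary_  # term -> column index
first_doc = tfidf_matrix[0]     # weights for "love the speaker"

the_weight = first_doc[vocab["the"]]    # "the" occurs in all three documents
love_weight = first_doc[vocab["love"]]  # "love" occurs in two of three

print("weight of 'the': ", the_weight)
print("weight of 'love':", love_weight)
print("'the' is down-weighted:", the_weight < love_weight)
```

Both words occur exactly once in the first document, so the difference in weight comes entirely from how many documents contain each word.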

    tfidf_vector = TfidfVectorizer(tokenizer = spacy_tokenizer)

Splitting the Data into Training and Test Sets

We're trying to build a classification model, but we need a way to know how it's actually performing. Dividing the dataset into a training set and a test set is the tried-and-true method for doing this. We'll use 70% of our data set as our training set, which will include the correct answers. Then we'll test our model using the remaining 30% of the data set without giving it the answers, to see how accurately it performs.

Conveniently, scikit-learn gives us a built-in function for doing this: train_test_split(). We just need to tell it the feature set we want it to split (X), the labels we want it to test against (ylabels), and the size we want to use for the test set (represented as a percentage in decimal form).
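Here is a minimal sketch of how train_test_split() divides data, using ten made-up reviews in place of the Alexa data set. The random_state argument is an addition for reproducibility and is not part of the tutorial's own call.

```python
from sklearn.model_selection import train_test_split

# Ten made-up reviews and labels, for illustration only.
reviews = [f"review number {i}" for i in range(10)]
labels = [1, 1, 1, 0, 1, 1, 0, 1, 1, 1]

# test_size=0.3 holds out 30% of the rows for testing.
# random_state (an assumption added here) makes the shuffle repeatable.
X_train, X_test, y_train, y_test = train_test_split(
    reviews, labels, test_size=0.3, random_state=42
)

print(len(X_train), "training rows")
print(len(X_test), "test rows")
```

The features and labels are shuffled together, so each review stays paired with its own label in both splits.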

    from sklearn.model_selection import train_test_split

    X = df_amazon['verified_reviews'] # the features we want to analyze
    ylabels = df_amazon['feedback'] # the labels, or answers, we want to test against

    X_train, X_test, y_train, y_test = train_test_split(X, ylabels, test_size=0.3)

Creating a Pipeline and Generating the Model

Now that we're all set up, it's time to actually build our model! We'll start by importing the LogisticRegression class and creating a LogisticRegression classifier object.

Then, we'll create a pipeline with three components: a cleaner, a vectorizer, and a classifier. The cleaner uses our predictors class object to clean and preprocess the text. The vectorizer uses our CountVectorizer object (bow_vector) to create the bag of words matrix for our text. The classifier is an object that performs the logistic regression to classify the sentiments.

Once this pipeline is built, we'll fit the pipeline components using fit().
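For readers who want to try the cleaner, vectorizer, classifier structure in isolation, the following is a self-contained sketch on made-up data. SimpleCleaner is a hypothetical stand-in for the tutorial's predictors class, and the vectorizer uses scikit-learn's default tokenizer instead of spacy_tokenizer, so this is not the tutorial's actual model.

```python
from sklearn.base import BaseEstimator, TransformerMixin
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline


class SimpleCleaner(BaseEstimator, TransformerMixin):
    """Hypothetical stand-in for the tutorial's `predictors` class."""

    def fit(self, X, y=None):
        return self  # nothing to learn from the data

    def transform(self, X):
        # Strip surrounding whitespace and lowercase every text.
        return [text.strip().lower() for text in X]


# Made-up training data: 1 = positive, 0 = negative.
train_texts = [
    "Love it, great sound",
    "Great speaker, works well",
    "Terrible, stopped working",
    "Bad sound and it broke",
]
train_labels = [1, 1, 0, 0]

toy_pipe = Pipeline([
    ("cleaner", SimpleCleaner()),
    ("vectorizer", CountVectorizer(ngram_range=(1, 1))),
    ("classifier", LogisticRegression()),
])

# fit() runs each step in order: clean, vectorize, then train the classifier.
toy_pipe.fit(train_texts, train_labels)

predictions = toy_pipe.predict(["great sound", "it broke"])
print(predictions)
```

Because the steps live in one Pipeline object, the same cleaning and vectorizing are applied automatically at prediction time.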

    # Logistic Regression Classifier
    from sklearn.linear_model import LogisticRegression
    classifier = LogisticRegression()

    # Create pipeline using Bag of Words
    pipe = Pipeline([("cleaner", predictors()),
                     ('vectorizer', bow_vector),
                     ('classifier', classifier)])

    # model generation
    pipe.fit(X_train,y_train)

    Pipeline(memory=None,
         steps=[('cleaner', <__main__.predictors object at 0x00000254DA6F8940>), ('vectorizer', CountVectorizer(analyzer='word', binary=False, decode_error='strict',
            dtype=<class 'numpy.int64'>, encoding='utf-8', input='content',
            lowercase=True, max_df=1.0, max_features=None, min_df=1,
            ...ty='l2', random_state=None, solver='liblinear', tol=0.0001,
              verbose=0, warm_start=False))])

Evaluating the Model

Let's take a look at how our model actually performs! We can do this using the metrics module from scikit-learn. Now that we've trained our model, we'll put our test data through the pipeline to come up with predictions. Then we'll use various functions of the metrics module to look at our model's accuracy, precision, and recall.

- Accuracy refers to the percentage of the total predictions our model makes that are completely correct.

- Precision describes the ratio of true positives to true positives plus false positives in our predictions.

- Recall describes the ratio of true positives to true positives plus false negatives in our predictions.

The scikit-learn documentation offers more details and more precise definitions of each term, but the bottom line is that all three metrics are measured from 0 to 1, where 1 is predicting everything completely correctly. Therefore, the closer our model's scores are to 1, the better.
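The three definitions above can be checked by hand on a small example. The sketch below uses made-up labels and predictions, counts the true and false positives and negatives directly, and compares the results with scikit-learn's metrics functions.

```python
from sklearn import metrics

# Made-up ground truth and predictions: 1 = positive, 0 = negative.
y_true = [1, 1, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0]

pairs = list(zip(y_true, y_pred))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

accuracy = (tp + tn) / len(pairs)
precision = tp / (tp + fp)
recall = tp / (tp + fn)

print("by hand:     ", accuracy, precision, recall)
print("scikit-learn:",
      metrics.accuracy_score(y_true, y_pred),
      metrics.precision_score(y_true, y_pred),
      metrics.recall_score(y_true, y_pred))
```

Here there are 4 true positives, 1 false positive, 1 false negative, and 2 true negatives, giving an accuracy of 0.75 and a precision and recall of 0.8 each.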

    from sklearn import metrics

    # Predicting with a test dataset
    predicted = pipe.predict(X_test)

    # Model Accuracy
    print("Logistic Regression Accuracy:",metrics.accuracy_score(y_test, predicted))
    print("Logistic Regression Precision:",metrics.precision_score(y_test, predicted))
    print("Logistic Regression Recall:",metrics.recall_score(y_test, predicted))

    Logistic Regression Accuracy: 0.9417989417989417
    Logistic Regression Precision: 0.9528508771929824
    Logistic Regression Recall: 0.9863791146424518

In other words, overall, our model correctly identified a comment's sentiment 94.2% of the time. When it predicted a review was positive, that review was actually positive 95% of the time. When handed a positive review, our model identified it as positive 98.6% of the time.

Resources and Next Steps

Over the course of this tutorial, we've gone from performing some very simple text analysis operations with spaCy to building our own machine learning model with scikit-learn. Of course, this is just the beginning, and there's a lot more that both spaCy and scikit-learn have to offer Python data scientists.

Here are some links to a few helpful resources:

- Scikit-learn documentation

- spaCy documentation

- Dataquest's Machine Learning Course on Linear Regression in Python; many other machine learning courses are also available in our Data Scientist path.

Source: https://www.dataquest.io/blog/tutorial-text-classification-in-python-using-spacy/